Nate Silver is a pollster with a reputation, having correctly predicted the outcome of the 2008 American presidential election in 49 of the 50 states. In 2012 he is giving the edge to President Obama over Governor Romney by a good margin.

There is a science to polling, and Mr. Silver knows it well enough to realize that it is not exact: all predictions come with a level of uncertainty.

But he figures that Mr. Obama has at least a 77% chance of winning the required 270 electoral college votes, even though he predicts the President will capture only 52% of the popular vote.
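How can a forecast of only 52% of the vote add up to a 77% chance of winning? One simplified way to see it is sketched below. This is not Mr. Silver's model. It treats the contest as if the popular vote alone decided it (in reality the electoral college does), and it assumes the forecast error is normally distributed with a standard error of 2.7 percentage points, a figure chosen purely for illustration. It also converts the resulting probability into odds, the form used at the end of this post.

```python
from statistics import NormalDist


def win_probability(expected_share: float, std_error: float) -> float:
    """Chance that the realized vote share ends up above 50%, assuming it is
    normally distributed around expected_share (both in percentage points)."""
    return 1.0 - NormalDist(mu=expected_share, sigma=std_error).cdf(50.0)


def odds_in_favour(p: float) -> float:
    """Convert a probability p (0 < p < 1) into 'x to 1' odds in favour."""
    return p / (1.0 - p)


# Hypothetical inputs: a 52% forecast with an assumed 2.7-point standard error.
p = win_probability(52.0, 2.7)
print(f"win probability: {p:.0%}, odds about {odds_in_favour(p):.1f} to 1")
```

With these assumed inputs the probability comes out at roughly 77%, or a little over 3 to 1. A smaller standard error would push the probability higher for the same 52% forecast, which is why a modest lead can still translate into a comfortable edge.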

The last time an incumbent sought re-election during the aftermath of a great recession was in 1936, when Franklin D. Roosevelt was challenged by the Republican Governor of Kansas, Alfred Landon.

At that time The Literary Digest magazine was the pollster to be reckoned with, having correctly predicted the winner in each presidential election since 1920, including Roosevelt’s 1932 victory.

It intended to leave nothing to chance in 1936, mailing out more than 10 million straw ballots to Americans whose addresses it culled from automobile registration lists and telephone books. In some cities it claimed to have captured a third of registered voters. Its poll gave the election to Landon by a strong margin, 55% of the vote to just 41% for Roosevelt.

The actual result: Roosevelt captured 62%, Landon took only 38%.

This is the most memorable error in the history of political polling, and it contributed in part to the demise of the magazine.

Notwithstanding his reputation, Mr. Silver is an upstart who began his career analysing baseball statistics, and figured he could do just as well in politics. He is more accurately compared, not to The Literary Digest, but to George Gallup, whose name is now synonymous with political polling, and who in the 1930s was also an upstart.

A 1948 profile in Time magazine described Gallup’s evolution this way:

> By 1932 Pollster Gallup was an up-and-coming expert at finding out who read what kind of toothpaste ads and why. One day he said to himself: “If it works for toothpaste, why not for politics?”

Gallup’s sample, at 50,000, was much smaller. Not only did he call the election in Roosevelt’s favour, he also, with a certain cockiness, went so far as to predict the outcome of the Digest poll, suggesting that its methods would give Landon 56% of the vote. He was off by only 1 percentage point.

There are two possible reasons why the Digest poll missed the mark.

The first is inherent in the challenge of extrapolating to a population by using information on a fraction of its members. But the science of statistics helps us deal with this by attaching a degree of uncertainty to the results.

This is why we should be cautious about making too much of tomorrow’s release, by the Bureau of Labor Statistics, of the US jobs numbers for October.

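The unemployment rate will be the most closely watched statistic, and an upward or downward tick will have pundits on both sides of the political divide shifting into high gear. In fact, there will likely be little of substance to say about the month-to-month change, because small movements from one month to the next are often within the sampling error of the household survey on which the rate is based.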

A larger sample helps narrow this sort of uncertainty. But the Literary Digest pollsters lulled themselves into a false sense of security, not fully realizing that in another sense size does not matter. Even Gallup’s sample of 50,000 was more than an order of magnitude larger than the three thousand or so used nowadays.
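To put rough numbers on the role of sample size, the sketch below computes the textbook margin of error for a proportion near 50%. It assumes a simple random sample and a 95% confidence level; both are assumptions of this illustration, not details from the polls discussed here.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for a proportion p
    estimated from a simple random sample of size n, in percentage points."""
    return 100 * z * math.sqrt(p * (1 - p) / n)


# Today's typical sample, Gallup's 1936 sample, and the Digest's 2.4 million replies.
for n in (3_000, 50_000, 2_400_000):
    print(f"n = {n:>9,}: ±{margin_of_error(n):.2f} points")
```

The margin shrinks only with the square root of the sample: roughly ±1.8 points at 3,000, ±0.4 at 50,000 and ±0.06 at 2.4 million. On paper the Digest's replies were precise to well under a tenth of a point, yet the poll missed Roosevelt's share by about 20 points. The formula holds only when the sample is random, which is the second source of error.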

The other reason polls can go wrong has to do with how the sample is chosen in the first place. It is crucial that it be drawn randomly from the population.

This didn’t happen in 1936. Only 2.4 million people mailed their responses back to the Digest. It is often thought that the results were biased because the original 10 million names were skewed toward the relatively well-to-do, who were more likely to own cars and telephones and therefore more likely to vote Republican. But in fact the bias had as much, if not more, to do with non-response: those who took the time to mail back their voting intentions were more likely to be Republicans.
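Non-response bias is easy to demonstrate with a small simulation. In the hypothetical sketch below, 62% of voters back Roosevelt, the actual result quoted above. The response rates (17% for Roosevelt supporters, 35% for Landon supporters) are invented for illustration, not estimates of what happened in 1936, and the sketch ignores any skew in the mailing list itself.

```python
import random


def simulate_mail_poll(n_mailed, true_share_r, response_rate_r, response_rate_l, seed=0):
    """Roosevelt's share (%) among returned ballots in a simulated mail poll.

    Each mailed voter backs Roosevelt with probability true_share_r (otherwise
    Landon) and returns the ballot with a probability that depends on whom
    they back.
    """
    rng = random.Random(seed)
    returned_r = returned_l = 0
    for _ in range(n_mailed):
        backs_roosevelt = rng.random() < true_share_r
        rate = response_rate_r if backs_roosevelt else response_rate_l
        if rng.random() < rate:
            if backs_roosevelt:
                returned_r += 1
            else:
                returned_l += 1
    return 100 * returned_r / (returned_r + returned_l)


def expected_share(true_share_r, response_rate_r, response_rate_l):
    """Roosevelt's share (%) among respondents in the limit of a very large mailing."""
    r = true_share_r * response_rate_r
    l = (1 - true_share_r) * response_rate_l
    return 100 * r / (r + l)


n_mailed = 200_000  # scaled down from 10 million to keep the run short
print(f"simulated poll: Roosevelt {simulate_mail_poll(n_mailed, 0.62, 0.17, 0.35):.1f}%")
print(f"expected:       Roosevelt {expected_share(0.62, 0.17, 0.35):.1f}%")
```

Under these made-up response rates Roosevelt shows up with only about 44% of returned ballots, despite holding 62% of the electorate. Mailing more ballots does not help: `expected_share` does not depend on `n_mailed` at all, because the bias comes from who answers rather than from how many.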

Pollsters are acutely aware of these twin dangers. Those conducting surveys by telephone are careful to include mobile phone numbers, not just landlines, and they strive to correct for the ever-growing rate of non-response.

But Mr. Silver, who publishes his results for the New York Times on a blog called FiveThirtyEight, is not playing the same game. He is polling the pollsters, tracking every possible published poll.

The rationale is that if all of these polls are conducted scientifically, using samples drawn randomly from the population, then some will overstate the share of the vote captured by Mr. Obama while others will understate it. An average of all the polls should net out these variations and produce a result in which we can be more confident.
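The averaging logic can be illustrated with a small simulation. The assumptions are ours rather than Mr. Silver's: a hypothetical true vote share of 52%, twenty independent polls of 1,000 randomly sampled voters each, and a plain sample-size-weighted average in place of whatever weighting FiveThirtyEight actually uses.

```python
import random


def weighted_average(polls):
    """Sample-size-weighted average of (share_percent, sample_size) pairs."""
    total_n = sum(n for _, n in polls)
    return sum(share * n for share, n in polls) / total_n


def simulate_poll(true_share, n, rng):
    """One simple random sample of n voters; returns the candidate's share in percent."""
    hits = sum(rng.random() < true_share / 100 for _ in range(n))
    return 100 * hits / n


rng = random.Random(42)
TRUE_SHARE = 52.0  # hypothetical true share, in percent
polls = [(simulate_poll(TRUE_SHARE, 1_000, rng), 1_000) for _ in range(20)]

individual_errors = [abs(share - TRUE_SHARE) for share, _ in polls]
print(f"largest single-poll error: {max(individual_errors):.1f} points")
print(f"error of the average:      {abs(weighted_average(polls) - TRUE_SHARE):.1f} points")
```

Individual polls scatter by a point or more around the true value, while their average typically lands much closer. Averaging only cancels random sampling error, though. If every poll leans the same way, the average inherits the lean.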

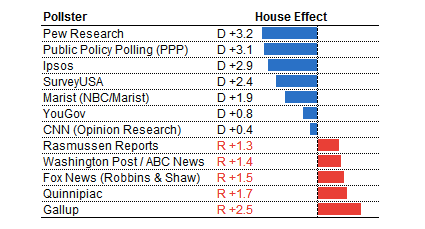

Interestingly, though, things are not so simple. Mr. Silver also needs to make a whole host of additional corrections, because he is aware of systematic differences in results between polling companies. He corrects for these using his intuition, but also a computer program he has devised. The most interesting is a “house effect”: some polling firms appear consistently to overstate the vote share of a particular candidate.

Results from Gallup surveys seem to consistently favour the Republicans by about 2.5 percentage points; the polls conducted by Pew favour the Democrats by even more.

These systematic tendencies can easily trip up the pundits. As Mr. Silver explains:

> A polling firm that tends to show favorable results for Barack Obama might survey a state one week, showing him three percentage points ahead. A different polling firm that usually has favorable results for Mitt Romney comes out with a survey in the same state the next week, showing him up by two points. Rather than indicating a change in voter preferences, these results may just reflect the different techniques and assumptions that the polling firms have applied.

And so there is a need to understand the underlying reasons for these and other differences. Mr. Silver makes a series of adjustments that, he claims, improve the results.
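A bare-bones house-effect correction might look like the sketch below. It is hypothetical and is not Mr. Silver's procedure, which is not spelled out here. Each firm's house effect is estimated as the gap between its average margin and the average across firms, and that gap is then subtracted from the firm's polls. The firm names and margins (Obama minus Romney, in points) are invented, loosely echoing the example in Mr. Silver's quotation.

```python
from collections import defaultdict
from statistics import mean


def house_effects(polls):
    """Estimate each firm's house effect as the gap between the mean of its polls
    and the mean of all firms' averages (so every firm counts equally)."""
    by_firm = defaultdict(list)
    for firm, margin in polls:
        by_firm[firm].append(margin)
    firm_means = {firm: mean(margins) for firm, margins in by_firm.items()}
    consensus = mean(firm_means.values())
    return {firm: m - consensus for firm, m in firm_means.items()}


def adjust(polls, effects):
    """Subtract each firm's estimated house effect from its reported margin."""
    return [(firm, margin - effects[firm]) for firm, margin in polls]


# Hypothetical polls of one state: (firm, Obama margin in points).
polls = [
    ("Firm A", 3.0), ("Firm A", 4.0),    # a firm that leans toward Obama
    ("Firm B", -2.0), ("Firm B", -1.0),  # a firm that leans toward Romney
    ("Firm C", 1.0), ("Firm C", 0.0),
]

effects = house_effects(polls)
for firm, adjusted_margin in adjust(polls, effects):
    print(f"{firm}: {adjusted_margin:+.1f}")
```

After adjustment, Firm A's Obama +3 and Firm B's Romney +2 both become the same small Obama lead of about a third of a point: the apparent swing came from the firms, not the voters. One caveat applies to this simple design. Measuring each firm against the average of firms cannot detect a bias that all of them share.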

The science of statistics never gives us certainties, only promising probabilities, and in the end that is all Mr. Silver is offering: about 3 to 1, maybe even 4 to 1, for Obama. But this seems a good deal more informed than the opinions of many pundits, or for that matter any one poll, including those by the firm that continues to carry the name of an upstart from two generations past.